Reading: Agent Instruction Design and Prompt Engineering

Estimated time: 8 minutes

Learning objectives:

- Explain how agent instructions differ from traditional prompts and how they support autonomy, reasoning, and task management.

- Describe key patterns for agent goal-setting, escalation, memory management, and tool selection in business automation contexts.

Introduction

As generative AI agents become more capable, prompting isn't just about getting one good response; it's about designing instructions that guide ongoing behavior. When you build an agent, you define how it should think and act. When you connect it to tools, you're also responsible for instructing how those tools should behave. This reading explores two critical layers of prompting: agent prompting and tool prompting.

How agent instructions differ from traditional prompts

Traditional prompt engineering focuses on crafting questions or commands that elicit a single, high-quality response from a generative AI model. For example, a prompt like "Summarize the following article in three sentences" is clear, concise, and expects a one-time output.

In contrast, agent instruction design must support ongoing autonomy and interaction. Agents are expected to make decisions, manage multi-step processes, and adapt to changing contexts without constant human intervention. Their instructions should provide frameworks for behavior that allow for flexible, context-aware action.

For example, instead of scripting out a step-by-step workflow like "Monitor the inbox, assess messages, draft replies, and escalate when needed," an agent prompt would define the broader intent:

"You are a customer communication assistant. Monitor the inbox for client messages. Respond to routine inquiries using prior correspondence for context. Escalate complex or unfamiliar issues to a human support representative."

This type of prompt sets the agent's role, purpose, and boundaries while allowing it to reason about how to carry out those tasks. It empowers the agent to act independently, use available tools as needed, and involve a human only when necessary.

How agent prompting works

Agent prompting is the process of defining an AI agent's role, objectives, and behavioral boundaries. Unlike traditional prompting—which typically asks a model to perform a single task like summarizing text or answering a question—agent prompting establishes the broader context for autonomous decision-making. It tells the agent what it is, what it's responsible for, and how it should behave as it works toward a goal.

For example, if you're building an AI agent to support a product launch, the prompt might look like this:

"You are a launch operations agent for a tech startup. Your goal is to monitor incoming feedback from early users across email, chat, and social media. Summarize key insights daily for the product team, tag urgent issues, and use internal documentation to respond when appropriate. Escalate anything related to bugs or security to the engineering lead immediately."

This prompt doesn't script every action the agent should take. Instead, it defines the mission, the available resources, and when to involve humans. This gives the agent the autonomy to act on new information, reason through decisions, and operate independently within guardrails.

Effective agent prompts generally include:

- A clear role and purpose

- Access to specific tools or systems

- Behavioral guidelines (tone, priorities, or decision preferences)

- Escalation paths or fallback conditions
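To make these four components concrete, here is a minimal Python sketch that keeps them as separate fields and assembles them into a single instruction string. The `AgentPrompt` class and its field names are illustrative assumptions, not part of any particular platform; most no-code tools simply give you a text box where the assembled result would go.

```python
from dataclasses import dataclass, field


@dataclass
class AgentPrompt:
    """Hypothetical container for the parts of an agent prompt."""
    role: str                                             # who the agent is and what it is for
    tools: list[str]                                      # tools or systems it may use
    guidelines: list[str] = field(default_factory=list)   # tone, priorities, preferences
    escalation: list[str] = field(default_factory=list)   # hand-off and fallback rules

    def render(self) -> str:
        """Join the parts into one instruction string, one directive per line."""
        lines = [self.role]
        if self.tools:
            lines.append("Available tools: " + ", ".join(self.tools) + ".")
        lines.extend(self.guidelines)
        lines.extend(self.escalation)
        return "\n".join(lines)


launch_agent = AgentPrompt(
    role="You are a launch operations agent for a tech startup.",
    tools=["email", "chat", "social media", "internal documentation"],
    guidelines=[
        "Summarize key insights from early-user feedback daily for the product team.",
        "Tag urgent issues.",
        "Use internal documentation to respond when appropriate.",
    ],
    escalation=[
        "Escalate anything related to bugs or security to the engineering lead immediately.",
    ],
)

print(launch_agent.render())
```

Keeping the parts separate makes it easier to review each one (for example, to confirm that every agent has an escalation rule) before pasting the rendered text into the agent's instruction field.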

By providing this structured autonomy, agent prompting enables generative AI systems to function intelligently in fast-moving, high-stakes environments, not just repeat static tasks.

Defining agent goals and objectives for independent reasoning

Once you understand how agent prompting differs from traditional prompts, the next step is crafting clear objectives that guide how the agent thinks and acts. A well-defined agent prompt should give the system enough context to reason about its tasks, use the appropriate tools, and make decisions without constant human direction.

For example, instead of telling the agent, "Check each calendar for availability and send a meeting invite," you'd define a broader goal like:

"You are a scheduling assistant. Help users book meetings at mutually convenient times. Prioritize speed and clarity. Use calendar and email tools, and escalate if scheduling conflicts cannot be resolved."

This kind of objective-based prompting allows the agent to decide how to achieve the goal, for example by querying multiple calendars, proposing alternative time slots, or falling back to other methods if a preferred time isn't available. The prompt doesn't prescribe every action. Instead, it gives the agent direction, autonomy, and criteria for when to ask for help.

These agent-level goals serve as a strategic guide—like a mission statement—helping the agent reason through unfamiliar situations and use tools intelligently to complete its tasks.
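The reasoning that the scheduling objective leaves to the agent can be sketched in Python. This is a simplified illustration under stated assumptions: each attendee's calendar is modeled as a hypothetical set of free start times, the helper names `find_common_slots` and `schedule` are invented for this example, and a real agent would obtain availability through its calendar tool rather than from in-memory sets.

```python
from datetime import datetime


def find_common_slots(calendars: dict[str, set[datetime]], limit: int = 3) -> list[datetime]:
    """Return up to `limit` start times that are free for every attendee, earliest first."""
    free_sets = list(calendars.values())
    if not free_sets:
        return []
    common = set.intersection(*free_sets)
    return sorted(common)[:limit]


def schedule(calendars: dict[str, set[datetime]]) -> dict:
    """Propose shared slots, or escalate when no time works for everyone."""
    slots = find_common_slots(calendars)
    if slots:
        return {"action": "propose", "slots": slots}
    # Fallback from the agent prompt: unresolved conflicts go to a human.
    return {"action": "escalate", "reason": "No mutually convenient time found."}


# Hypothetical availability for two attendees.
alice = {datetime(2024, 5, 6, 10), datetime(2024, 5, 6, 14), datetime(2024, 5, 7, 9)}
bob = {datetime(2024, 5, 6, 14), datetime(2024, 5, 7, 9)}
print(schedule({"alice": alice, "bob": bob}))
```

Returning several options rather than a single time mirrors the "propose alternative time slots" behavior, while the escalate branch encodes the prompt's rule for conflicts that cannot be resolved.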

What tool prompting adds

While agent prompting defines the overall goals and behavior of an AI agent, tool prompting defines how the tools connected to the agent should operate when called upon. These tool prompts are created by the agent designer and live inside the tools themselves. They tell the tool exactly how to perform its specific task when triggered by the agent.

For example, if your agent uses a summarization tool, the prompt embedded in that tool might be:

"Summarize this customer email in one sentence for internal tracking."

For a search tool, it could be:

"Return the most relevant help article for this error message: '{{error_text}}'."

Each tool is configured with a clear, focused prompt—tuned for its specific purpose. The agent doesn't write these prompts but chooses which tool to run based on context. Tool prompts make the agent's actions more consistent, more reliable, and easier to control.
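A tool prompt works like a fixed template that the agent fills in at call time. The Python sketch below shows that idea using the two prompts above. The `Tool` class, the `build_prompt` method, and the `{{name}}` substitution rule are assumptions for illustration, since each platform defines its own template syntax.

```python
import re
from dataclasses import dataclass

PLACEHOLDER = re.compile(r"\{\{(\w+)\}\}")  # matches {{name}}


@dataclass(frozen=True)
class Tool:
    """A tool whose prompt is written by the agent designer, not by the agent."""
    name: str
    prompt_template: str

    def build_prompt(self, **values: str) -> str:
        """Fill each {{placeholder}} with a value supplied by the agent at call time."""
        def fill(match: re.Match) -> str:
            key = match.group(1)
            if key not in values:
                raise KeyError(f"Missing value for placeholder '{key}'")
            return values[key]

        return PLACEHOLDER.sub(fill, self.prompt_template)


summarize = Tool("summarize", "Summarize this customer email in one sentence for internal tracking.")
search = Tool("search", "Return the most relevant help article for this error message: '{{error_text}}'.")

# The agent only chooses the tool and supplies the variable part.
print(search.build_prompt(error_text="Payment gateway timeout"))
```

Because the template is frozen and only the placeholder changes, every call to the search tool asks the same question in the same way, which is what makes tool behavior consistent and easy to control.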

How agents use tool prompts autonomously

Once the agent receives an instruction or detects a trigger, it determines what actions are needed to complete the task. Based on its reasoning, it chooses the appropriate tools—each one preloaded with its own specialized prompt or programmed function. The agent runs those tools at the right moments and integrates their outputs into the larger workflow.
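In a real agent, the model itself reasons about which tool fits the request. The following Python sketch replaces that reasoning with simple keyword rules so the selection step is easy to see. The tool names, keywords, and the `choose_tools` and `run` helpers are hypothetical, and the tools only return strings describing what they would do.

```python
from typing import Callable

# Hypothetical tools: each wraps a preset prompt and, here, just reports what it would do.
TOOLS: dict[str, Callable[[str], str]] = {
    "calendar": lambda req: f"[calendar] Find available time slots for: {req}",
    "email": lambda req: f"[email] Send invitations or reminders for: {req}",
    "tasks": lambda req: f"[tasks] Create a tracking task for: {req}",
}

# Keyword rules standing in for the agent's own reasoning about which tools apply.
ROUTES: list[tuple[tuple[str, ...], str]] = [
    (("schedule", "meeting", "time slot"), "calendar"),
    (("invite", "remind", "confirm"), "email"),
    (("task", "follow up", "to-do"), "tasks"),
]


def choose_tools(request: str) -> list[str]:
    """Return every tool whose keywords appear in the request, in route order."""
    text = request.lower()
    return [tool for keywords, tool in ROUTES if any(k in text for k in keywords)]


def run(request: str) -> list[str]:
    """Run the chosen tools in order, or escalate when none applies."""
    chosen = choose_tools(request)
    if not chosen:
        return ["escalate: no suitable tool for this request"]
    return [TOOLS[name](request) for name in chosen]


for line in run("Schedule a planning meeting and send invites"):
    print(line)
```

The split of responsibilities is the point: the router decides *which* tools run, while each tool's own preset prompt decides *how* it runs.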

Let's say you've built an agent to manage event coordination. The agent prompt might be:

"You are an event assistant. Help organize meetings, coordinate invites, and send reminders. Use calendar, communication, and task-tracking tools as needed."

From this high-level instruction, the agent determines which steps to take. For example:

- It might run a scheduling tool that queries the calendar with its preset prompt:

"Find the next 3 available time slots for a 60-minute session after 10 AM this week."

- Then call a communication tool with its configured prompt:

"Send an email to all invitees confirming the selected time and sharing event details."

The agent selects tools with prewritten prompts or functions, executes them, and uses the results to move the task forward. This combination of autonomous reasoning and controlled tool behavior makes AI agents flexible and reliable.
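The two-step flow in the event example can be sketched in Python. Under the stated assumptions, `calendar_tool` stands in for the preset "next 3 available time slots" prompt and `communication_tool` for the confirmation email; both are hypothetical functions, busy time is passed in as a list of intervals, and no real calendar or email API is called. The point is the hand-off: the agent passes the first tool's output into the second.

```python
from datetime import datetime, timedelta


def calendar_tool(busy: list[tuple[datetime, datetime]], week_start: datetime,
                  duration: timedelta = timedelta(minutes=60), count: int = 3) -> list[datetime]:
    """Preset task: find the next `count` free slots of `duration` starting at 10 AM or later.

    Simplifying assumption: candidate starts fall on the hour, 10:00 to 16:00,
    Monday to Friday, with `week_start` being the Monday of the target week.
    """
    slots: list[datetime] = []
    for day in range(5):
        for hour in range(10, 17):
            day_start = week_start + timedelta(days=day)
            start = day_start.replace(hour=hour, minute=0, second=0, microsecond=0)
            end = start + duration
            # Free only if the slot overlaps none of the busy intervals.
            if all(end <= b_start or start >= b_end for b_start, b_end in busy):
                slots.append(start)
                if len(slots) == count:
                    return slots
    return slots


def communication_tool(invitees: list[str], start: datetime, title: str) -> list[str]:
    """Preset task: draft one confirmation message per invitee (actual sending is out of scope)."""
    when = start.strftime("%Y-%m-%d %H:%M")
    return [f"To: {person}\nSubject: {title} confirmed for {when}" for person in invitees]


def coordinate_event(invitees: list[str], busy: list[tuple[datetime, datetime]],
                     week_start: datetime, title: str) -> list[str]:
    """Agent-side glue: run the calendar tool, then pass its output to the communication tool."""
    slots = calendar_tool(busy, week_start)
    if not slots:
        return ["escalate: no free 60-minute slot after 10 AM this week"]
    return communication_tool(invitees, slots[0], title)


monday = datetime(2024, 5, 6)  # example Monday
busy = [(datetime(2024, 5, 6, 10), datetime(2024, 5, 6, 12))]
for message in coordinate_event(["ana@example.com", "li@example.com"], busy, monday, "Launch sync"):
    print(message, end="\n\n")
```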

Techniques for escalation, error handling, and managing multi-step processes

Even well-configured agents will encounter edge cases, unexpected errors, or ambiguous situations. That's why both agent and tool prompts need to account for escalation and fallback behavior, defining what the system should do when it can't proceed confidently.

These behaviors start in the agent prompt, where you outline when the agent should hand off to a human or raise an alert:

"If you cannot complete a task after two attempts or encounter conflicting information, escalate to a human and summarize the issue."

But tool prompts can also include fallback logic, especially when tools are prone to failures or API timeouts. For example, a CRM tool prompt might include:

"If the CRM API fails, retry once. If still unavailable, return 'System error – escalate to support.'"

By building escalation logic into both agent and tool levels, you allow the system to handle uncertainty, fail gracefully, and keep humans in the loop when needed.
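The two escalation layers can be combined in one Python sketch. The assumptions here are deliberate simplifications: `fetch` is a hypothetical stand-in for the CRM API call, a `ConnectionError` represents a failure or timeout, and the escalation message format is invented. The tool retries once and returns the error string from the CRM tool prompt; the agent treats that string as a failed attempt and escalates with a summary after two attempts. The "conflicting information" trigger is not modeled.

```python
from typing import Callable

SYSTEM_ERROR = "System error – escalate to support."  # sentinel from the CRM tool prompt


def crm_tool(fetch: Callable[[], str]) -> str:
    """Tool-level fallback: call the CRM, retry once on failure, then return the sentinel."""
    for _ in range(2):  # the first call plus one retry
        try:
            return fetch()
        except ConnectionError:
            continue
    return SYSTEM_ERROR


def agent_step(task: str, fetch: Callable[[], str], max_attempts: int = 2) -> str:
    """Agent-level rule: after `max_attempts` failed attempts, escalate with a short summary."""
    failures: list[str] = []
    for attempt in range(1, max_attempts + 1):
        result = crm_tool(fetch)
        if result != SYSTEM_ERROR:
            return result
        failures.append(f"attempt {attempt}: {result}")
    return f"ESCALATE to human: could not complete '{task}'. " + " | ".join(failures)


def crm_down() -> str:
    # Hypothetical stand-in for an unreachable CRM API.
    raise ConnectionError("CRM API timeout")


print(agent_step("look up customer account", crm_down))
```

Note how the layers multiply: with both limits at their defaults, a fully unavailable CRM is called four times (two tool calls per agent attempt) before a human is involved, so the two limits are best tuned together.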

Applying no-code AI automation tools to build a simple agent

With a foundational understanding of agent instruction design, you can apply these concepts using no-code AI automation platforms. These tools empower professionals to build agents without deep programming expertise and often provide visual interfaces for defining agent instructions, memory settings, tool integrations, and escalation workflows.

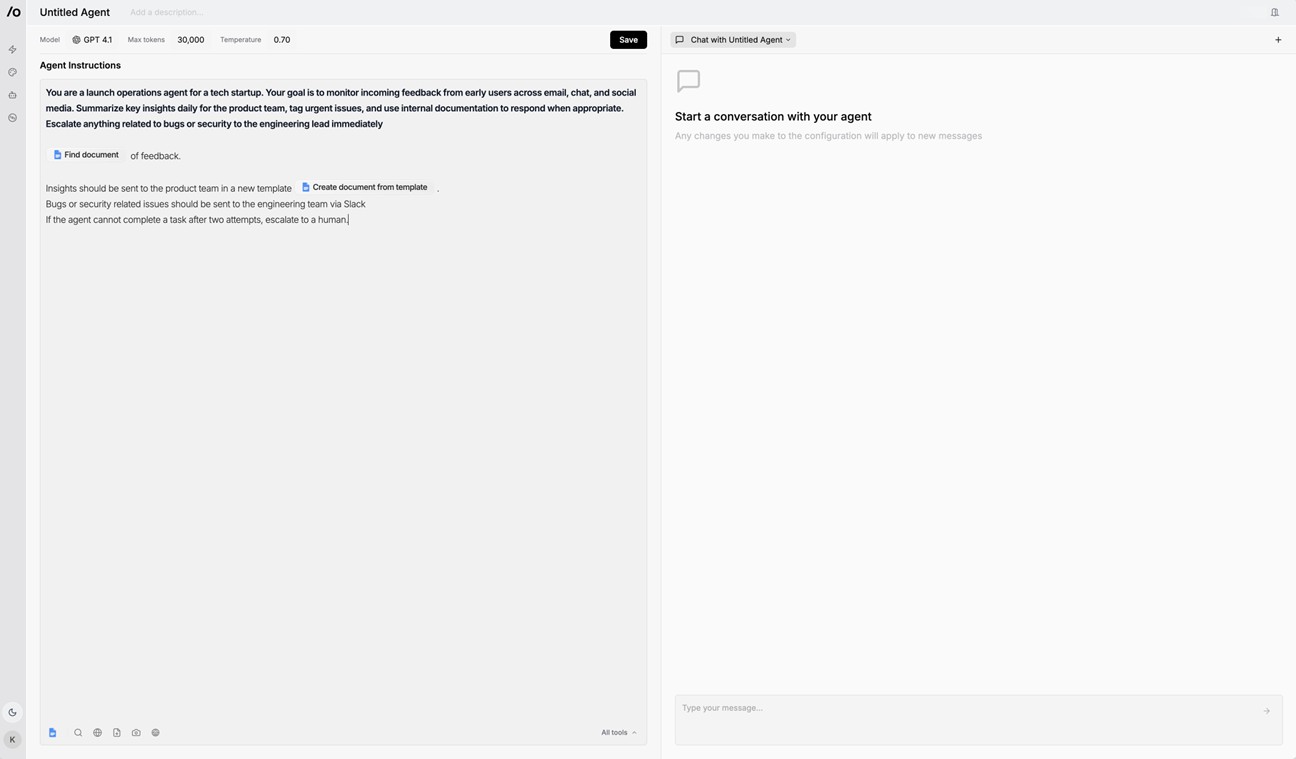

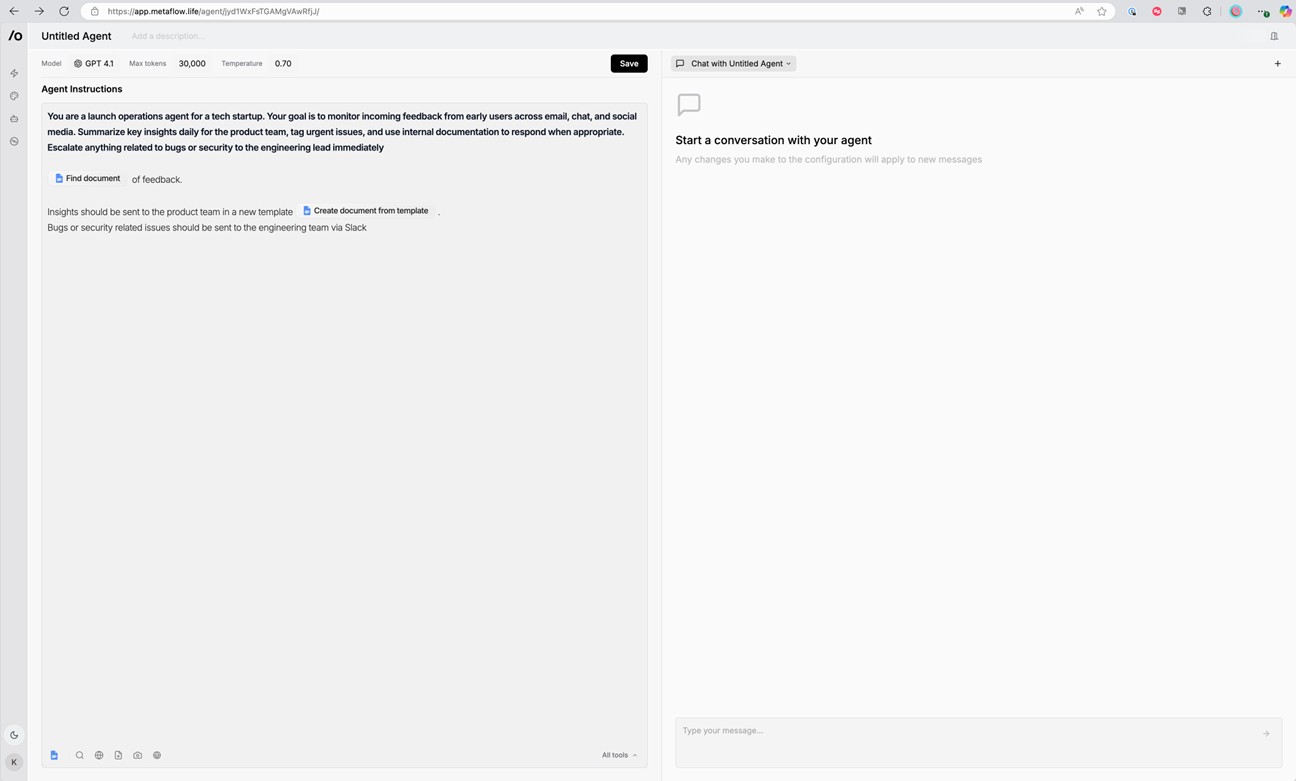

Building agents in Metaflow (step-by-step)

Step 1: Create a new agent

- From the Metaflow welcome screen, click “Create an Agent.”

- This opens a blank canvas with a field for Agent Instructions.

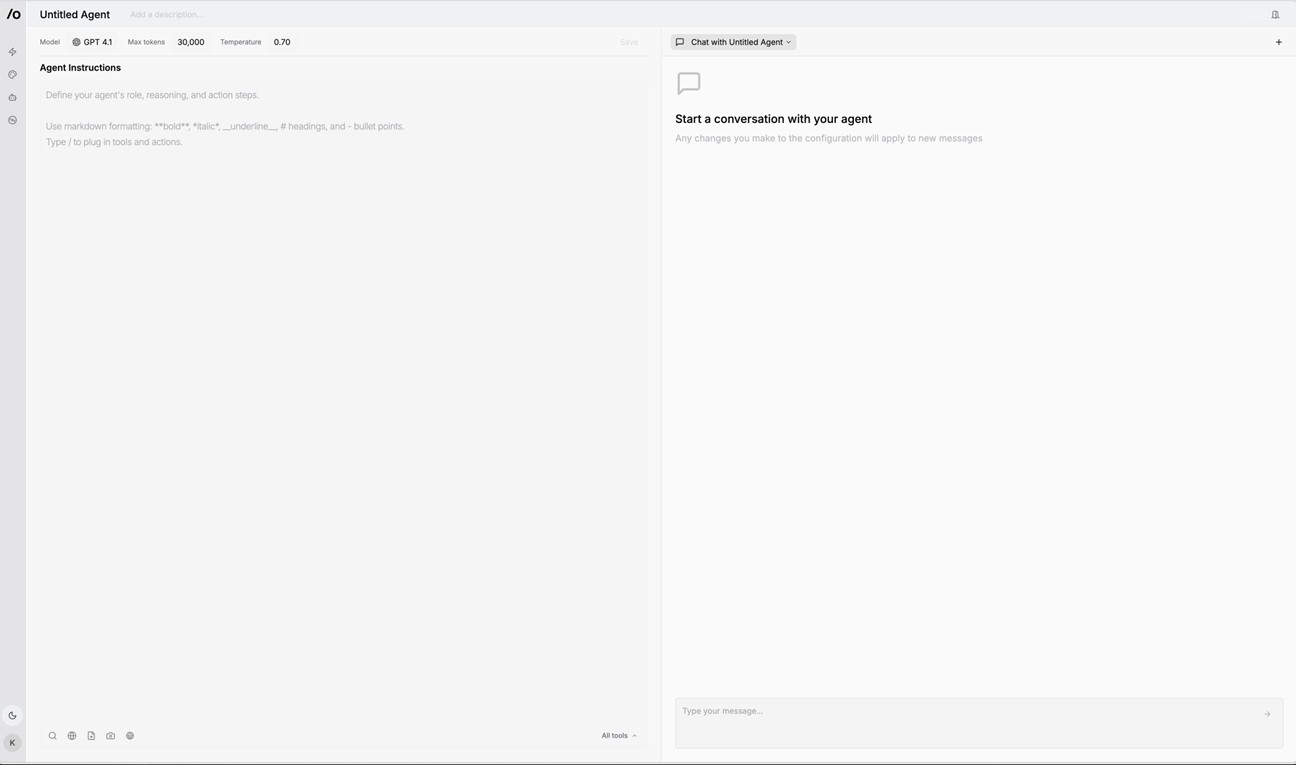

Step 2: Write the agent prompt

- In the left panel, write a clear, high-level instruction that defines:

  - The agent's role

  - The task it needs to complete

  - Any tools it will use

  - Escalation logic, if needed

Example:

You are a launch operations agent for a tech startup. Monitor incoming feedback from early users via email, chat, and social media. Summarize key insights daily, tag urgent issues, and use internal documentation to respond when appropriate. Escalate bugs or security issues to engineering immediately.

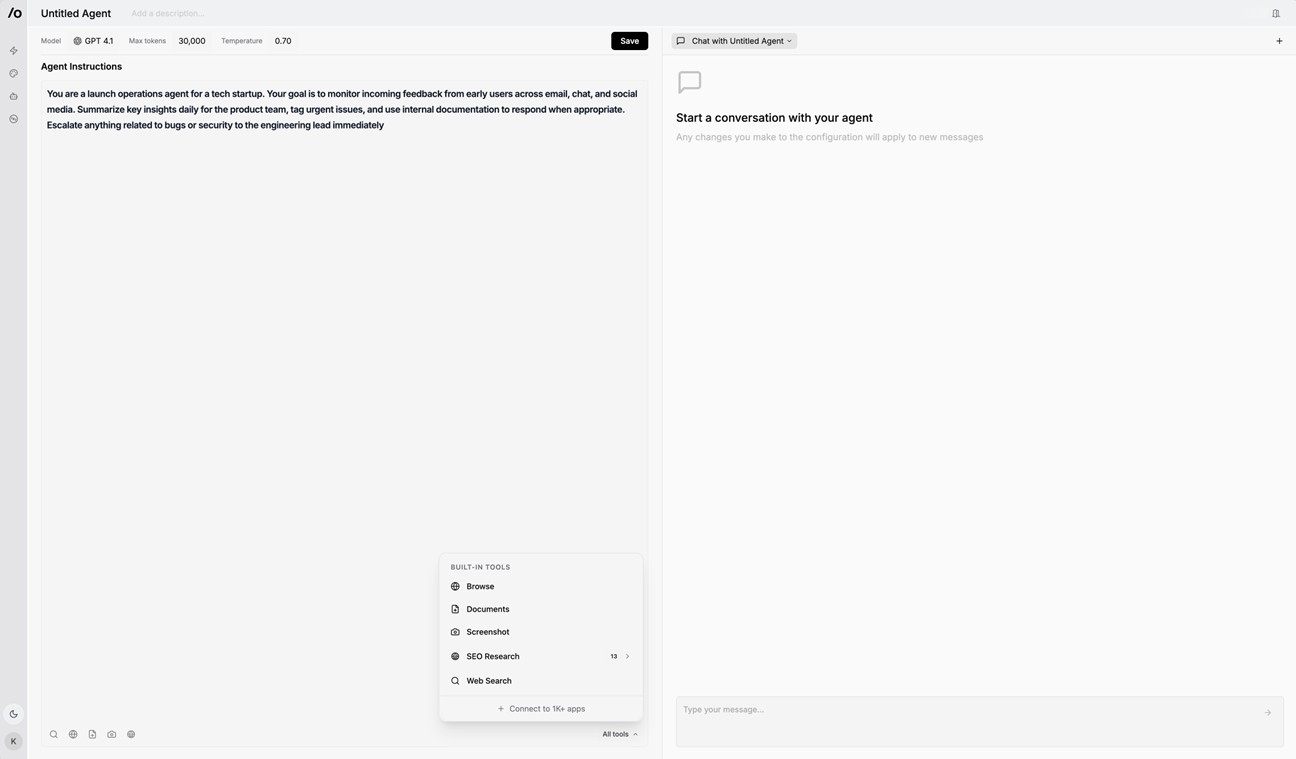

Step 3: Add tools to the agent

- Type/click "All tools" in the lower left corner.

- Choose built-in tools (e.g., Browse, Documents, Web Search, SEO, and Screenshot) or connect external apps (e.g., Gmail, Slack, Notion) through MCP integrations.

Step 4: Define escalation logic or conditions (optional)

- In the Agent Instructions field, include logic like:

"If the agent cannot complete a task after two attempts, escalate to a human."

- You can also use conditions to trigger tool actions based on outcomes (e.g., escalate to Slack if a bug is detected).

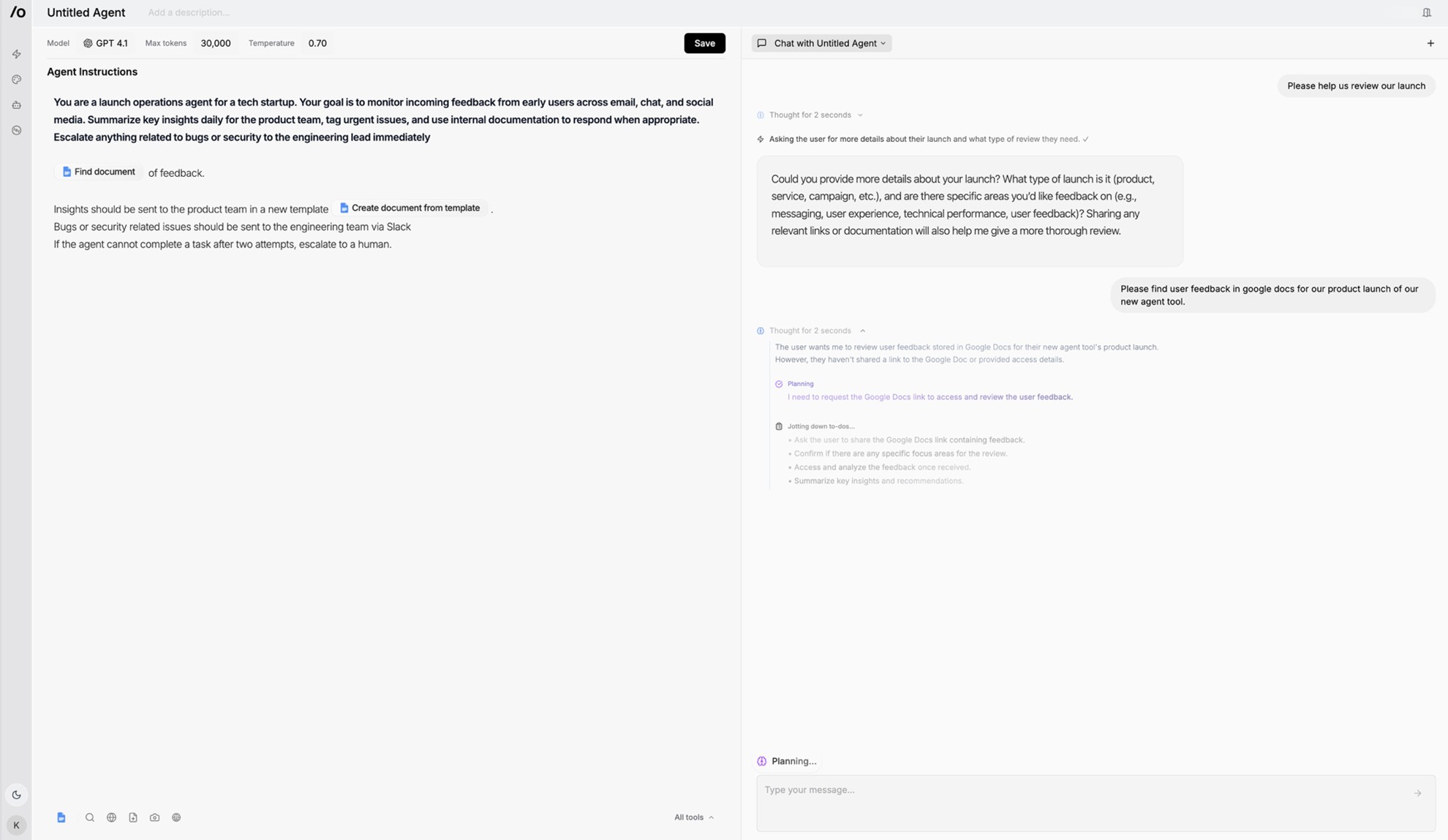

Step 5: Test the agent

- Once your prompt and tools are configured, test the agent by starting a conversation in the right-hand chat panel.

- Try real-world inputs to validate reasoning and tool execution.

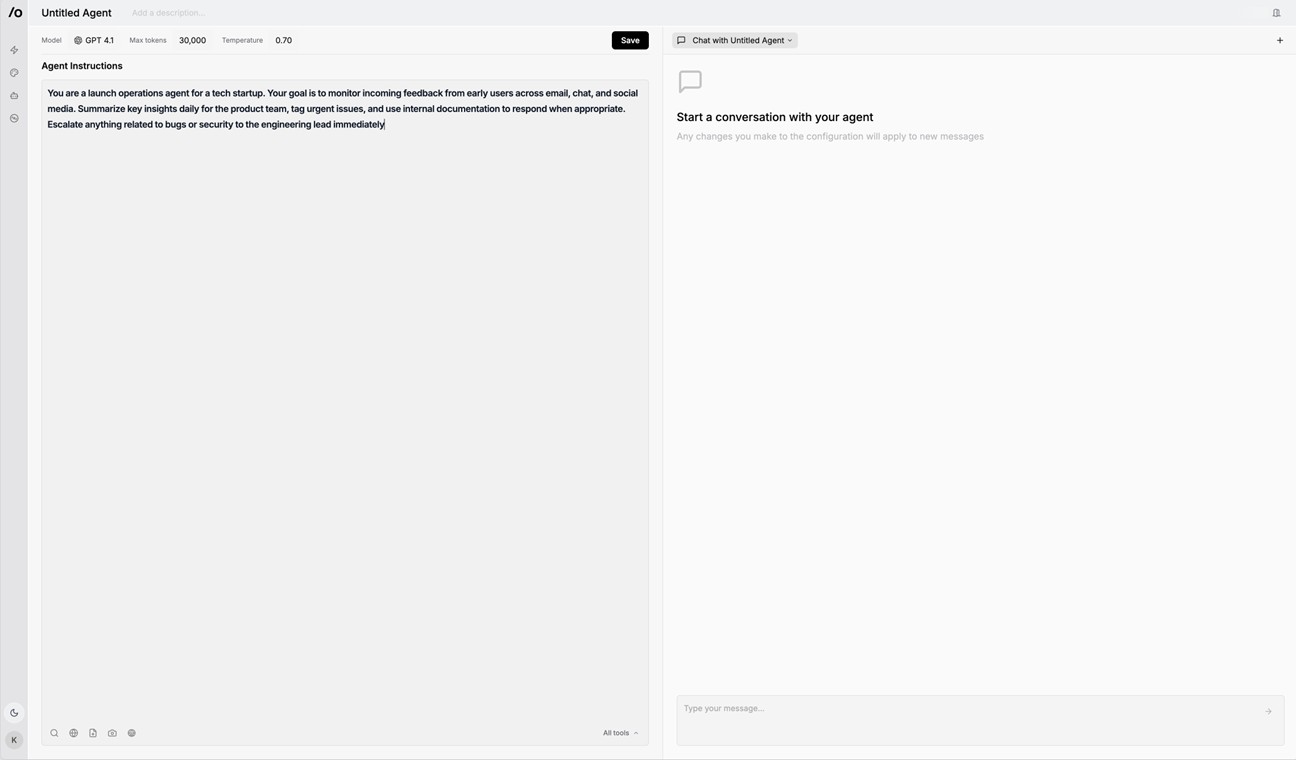

Step 6: Save and iterate

- Click Save in the top right corner to lock in your configuration.

- Revisit your agent anytime to refine prompts, add tools, or adjust workflows.

Summary

Designing instructions for generative AI agents requires moving beyond single-response prompts to frameworks that support autonomy, reasoning, and ongoing task management. Clear goals, escalation protocols, and patterns for memory and tool use enable agents to operate independently and reliably in complex workflows. No-code platforms further simplify building such agents, making advanced AI automation accessible to professionals without programming expertise.